High performance containers

Overview

Teaching: 15 min

Exercises: 15 minQuestions

How can I run an MPI enabled application in a container using a bind approach?

How can I run a GPU enabled application in a container?

Objectives

Discuss the bind approach for containerised MPI applications, including its performance

Get started with containerised GPU applications

Run-real world MPI/GPU examples using OpenFoam, Gromacs and Tensorflow

NOTE: the following hands-on session focuses on Singularity only.

Configure the MPI/interconnect bind approach

Before we start, let us cd to the openfoam example directory, adjust the PATH, and make sure this repo is up to date:

cd ~/isc-tutorial/exercises/openfoam

OLDPATH=$PATH

PATH=/opt/intel/oneapi/mpi/latest/bin:$PATH

git checkout isc24

git pull

Now, suppose you have an MPI installation in your host and a containerised MPI application, built upon MPI libraries that are ABI compatible with the former.

For this tutorial, we do have MPICH installed on the host machine:

which mpirun

/opt/intel/oneapi/mpi/latest/bin/mpirun

and we’re going to pull an OpenFoam container, which was built on top of MPICH as well:

singularity pull library://marcodelapierre/beta/openfoam:v2012

Note that this image has already been pulled on the tutorial VMs. OpenFoam comes with a collection of executables, one of which is simpleFoam. We can use the Linux command ldd to investigate the libraries that this executable links to. As simpleFoam links to a few tens of libraries, let’s specifically look for MPI (libmpi*) libraries in the command output:

singularity exec openfoam_v2012.sif bash -c 'ldd $(which simpleFoam) |grep libmpi'

libmpi.so.12 => /usr/lib/libmpi.so.12 (0x00007f4a93ff8000)

This is the container MPI installation that was used to build OpenFoam.

How do we setup a bind approach to make use of the host MPI installation?

We can make use of Singularity-specific environment variables, to make these host libraries available in the container (see location of MPICH from which mpirun above):

export SINGULARITY_BINDPATH="/opt/intel/oneapi"

export SINGULARITYENV_LD_LIBRARY_PATH="/opt/intel/oneapi/mpi/latest/lib/release:/opt/intel/oneapi/mpi/latest/lib/libfabric:\$LD_LIBRARY_PATH"

Note the “escaping” of the dollar sign at the end. This is to keep the explicit name of the LD_LIBRARY_PATH within the definition (and not the evaluation of its value in the host).

Display the variables

So the environment variable looks like this in the host:

echo $SINGULARITYENV_LD_LIBRARY_PATH/opt/intel/oneapi/mpi/latest/lib/release:/opt/intel/oneapi/mpi/latest/lib/libfabric:$LD_LIBRARY_PATHThen, the evaluation of

LD_LIBRARY_PATHwithin the container has, at the beginning, the additional path for the libraries in the host (as intended). And the rest is the original definition of the variable within the container (as intended).singularity exec openfoam_v2012.sif bash -c 'echo $LD_LIBRARY_PATH'/opt/intel/oneapi/mpi/latest/lib/release:/opt/intel/oneapi/mpi/latest/lib/libfabric:/opt/OpenFOAM/ThirdParty-v2012/platforms/linux64Gcc/fftw-3.3.7/lib64:/opt/OpenFOAM/ThirdParty-v2012/platforms/linux64Gcc/CGAL-4.12.2/lib64:/opt/OpenFOAM/ThirdParty-v2012/platforms/linux64Gcc/boost_1_66_0/lib64:/opt/OpenFOAM/ThirdParty-v2012/platforms/linux64Gcc/ADIOS2-2.6.0/lib:/opt/OpenFOAM/ThirdParty-v2012/platforms/linux64Gcc/ParaView-5.6.3/lib:/opt/OpenFOAM/OpenFOAM-v2012/platforms/linux64GccDPInt32Opt/lib/sys-mpi:/opt/OpenFOAM/ThirdParty-v2012/platforms/linux64GccDPInt32/lib/sys-mpi:/home/ofuser/OpenFOAM/ofuser-v2012/platforms/linux64GccDPInt32Opt/lib:/2012/platforms/linux64GccDPInt32Opt/lib:/opt/OpenFOAM/OpenFOAM-v2012/platforms/linux64GccDPInt32Opt/lib:/opt/OpenFOAM/ThirdParty-v2012/platforms/linux64GccDPInt32/lib:/opt/OpenFOAM/OpenFOAM-v2012/platforms/linux64GccDPInt32Opt/lib/dummy:/.singularity.d/libs:/.singularity.d/libs

When a spack installation of mpich is in place

NOTE: This part is for informational purposes.

Paths are a bit longer, but procedure is the same:

which mpirun/opt/spack/opt/spack/spack_path_placeholder/spack_path_placeholder/spack_path_placeholder/spack_path_placeholder/s/linux-amzn2-x86_64/gcc-7.3.1/mpich-3.3.2-q546vjf5teafqxsttapjokurnarsx5iz/bin/mpirunexport SINGULARITY_BINDPATH="/opt/spack" export SINGULARITYENV_LD_LIBRARY_PATH="/opt/spack/opt/spack/spack_path_placeholder/spack_path_placeholder/spack_path_placeholder/spack_path_placeholder/s/linux-amzn2-x86_64/gcc-7.3.1/mpich-3.3.2-q546vjf5teafqxsttapjokurnarsx5iz/lib:/opt/spack/opt/spack/spack_path_placeholder/spack_path_placeholder/spack_path_placeholder/spack_path_placeholder/s/linux-amzn2-x86_64/gcc-7.3.1/mpich-3.3.2-q546vjf5teafqxsttapjokurnarsx5iz//lib/libfabric:\$LD_LIBRARY_PATH"

Now, if we inspect mpirun dynamic linking again:

singularity exec openfoam_v2012.sif bash -c 'ldd $(which simpleFoam) |grep libmpi'

libmpi.so.12 => /opt/intel/oneapi/mpi/latest/lib/release/libmpi.so.12 (0x00007f5f9eafd000)

Now OpenFoam is picking up the host MPI libraries!

Note that, on a HPC cluster, with the same mechanism it is possible to expose the host interconnect libraries in the container, to achieve maximum communication performance.

Let’s run OpenFoam in a container!

To get the real feeling of running an MPI application in a container, let’s run a practical example.

We’re using OpenFoam, a widely popular package for Computational Fluid Dynamics simulations, which is able to massively scale in parallel architectures up to thousands of processes, by leveraging an MPI library.

The sample inputs come straight from the OpenFoam installation tree, namely $FOAM_TUTORIALS/incompressible/pimpleFoam/LES/periodicHill/steadyState/.

Before getting started, let’s make sure that no previous output file is present in the exercise directory:

./clean-outputs.sh

Now, let’s execute the script in the current directory:

./mpirun_intel.sh

This will take a few minutes to run. In the end, you will get the following output files/directories:

ls -ltr

total 1121572

-rwxr-xr-x. 1 tutorial livetau 1148433339 Nov 4 21:40 openfoam_v2012.sif

drwxr-xr-x. 2 tutorial livetau 59 Nov 4 21:57 0

-rw-r--r--. 1 tutorial livetau 798 Nov 4 21:57 slurm_pawsey.sh

-rwxr-xr-x. 1 tutorial livetau 843 Nov 4 21:57 mpirun.sh

-rwxr-xr-x. 1 tutorial livetau 197 Nov 4 21:57 clean-outputs.sh

-rwxr-xr-x. 1 tutorial livetau 1167 Nov 4 21:57 update-settings.sh

drwxr-xr-x. 2 tutorial livetau 141 Nov 4 21:57 system

drwxr-xr-x. 4 tutorial livetau 72 Nov 4 22:02 dynamicCode

drwxr-xr-x. 3 tutorial livetau 77 Nov 4 22:02 constant

-rw-r--r--. 1 tutorial livetau 3497 Nov 4 22:02 log.blockMesh

-rw-r--r--. 1 tutorial livetau 1941 Nov 4 22:03 log.topoSet

-rw-r--r--. 1 tutorial livetau 2304 Nov 4 22:03 log.decomposePar

drwxr-xr-x. 8 tutorial livetau 70 Nov 4 22:05 processor1

drwxr-xr-x. 8 tutorial livetau 70 Nov 4 22:05 processor0

-rw-r--r--. 1 tutorial livetau 18583 Nov 4 22:05 log.simpleFoam

drwxr-xr-x. 3 tutorial livetau 76 Nov 4 22:06 20

-rw-r--r--. 1 tutorial livetau 1533 Nov 4 22:06 log.reconstructPar

We ran using 2 MPI processes, who created outputs in the directories processor0 and processor1, respectively.

The final reconstruction creates results in the directory 20 (which stands for the 20th and last simulation step in this very short demo run), as well as the output file log.reconstructPar.

As execution proceeds, let’s ask ourselves: what does running singularity with MPI look run in the script? Here’s the script we’re executing:

#!/bin/bash

NTASKS="2"

image="library://marcodelapierre/beta/openfoam:v2012"

# this configuration depends on the host

PATH=/opt/intel/oneapi/mpi/latest/bin:/bin:/usr/bin:/usr/local/bin

export MPICH_ROOT="/opt/intel/oneapi"

export MPICH_RUN="$( which mpirun )"

export MPICH_BASE="${MPICH_RUN%/bin/mpirun*}"

export SINGULARITY_BINDPATH="$MPICH_ROOT"

export SINGULARITYENV_LD_LIBRARY_PATH="$MPICH_BASE/lib/release:$MPICH_BASE/libfabric/lib:\$LD_LIBRARY_PATH"

# pre-processing

singularity exec $image \

blockMesh | tee log.blockMesh

singularity exec $image \

topoSet | tee log.topoSet

singularity exec $image \

decomposePar -fileHandler uncollated | tee log.decomposePar

# run OpenFoam with MPI

mpirun -n $NTASKS \

singularity exec $image \

simpleFoam -fileHandler uncollated -parallel | tee log.simpleFoam

# post-processing

singularity exec $image \

reconstructPar -latestTime -fileHandler uncollated | tee log.reconstructPar

In the beginning, Singularity variable SINGULARITY_BINDPATH and SINGULARITYENV_LD_LIBRARY_PATH are defined to setup the bind approach for MPI.

Then, a bunch of OpenFoam commands are executed, with only one being parallel:

mpirun -n $NTASKS \

singularity exec $image \

simpleFoam -fileHandler uncollated -parallel | tee log.simpleFoam

That’s as simple as prepending mpirun to the singularity command line, as for any other MPI application.

Singularity interface to Slurm

Now, have a look at the script variant for the Slurm scheduler, slurm_pawsey.sh:

srun -n $SLURM_NTASKS \

singularity exec $image \

simpleFoam -fileHandler uncollated -parallel | tee log.simpleFoam

The key difference is that every OpenFoam command is executed via srun, i.e. the Slurm wrapper for the MPI launcher, mpirun. Other schedulers will require a different command.

In practice, all we had to do was to replace mpirun with srun, as for any other MPI application.

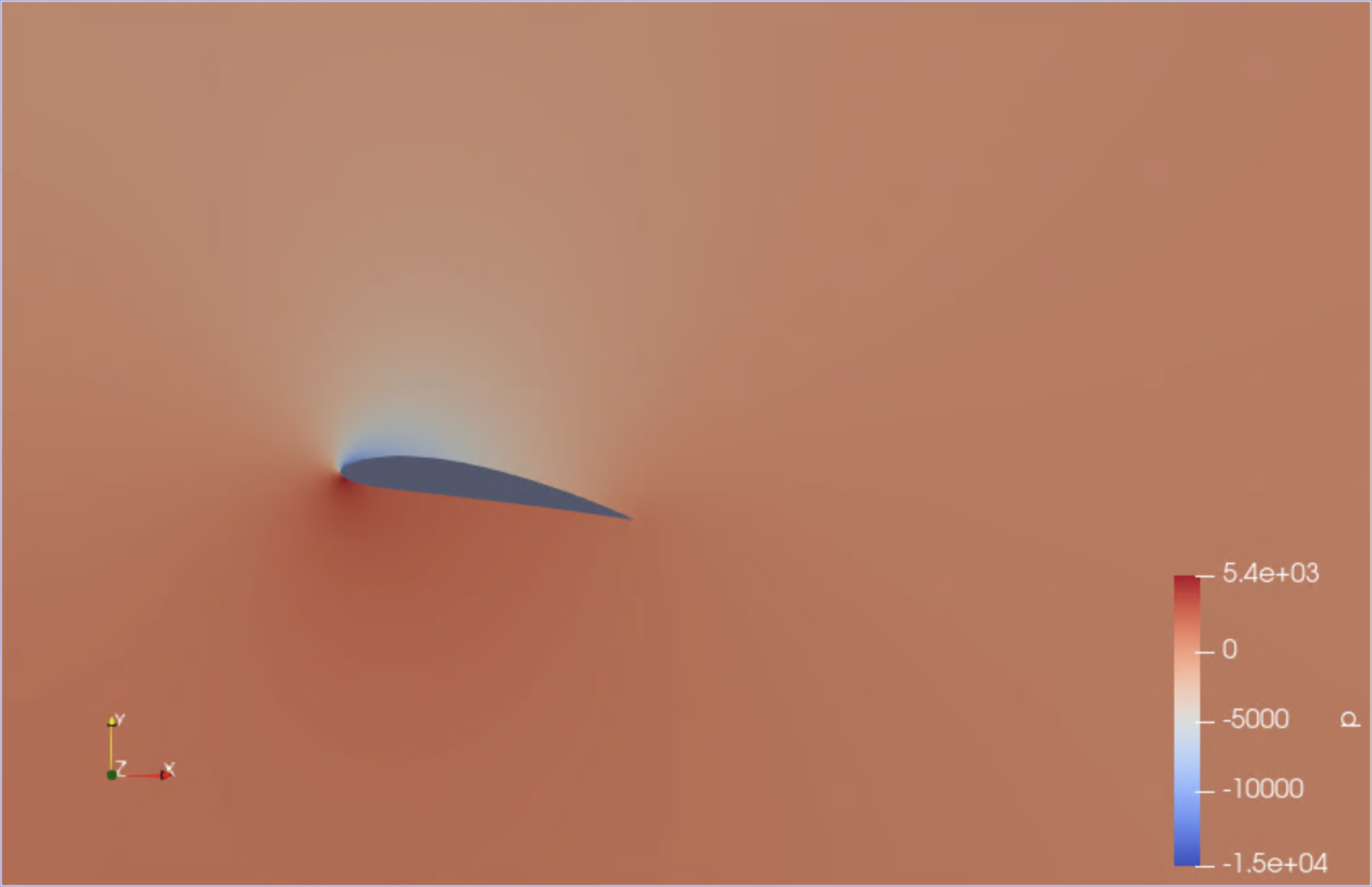

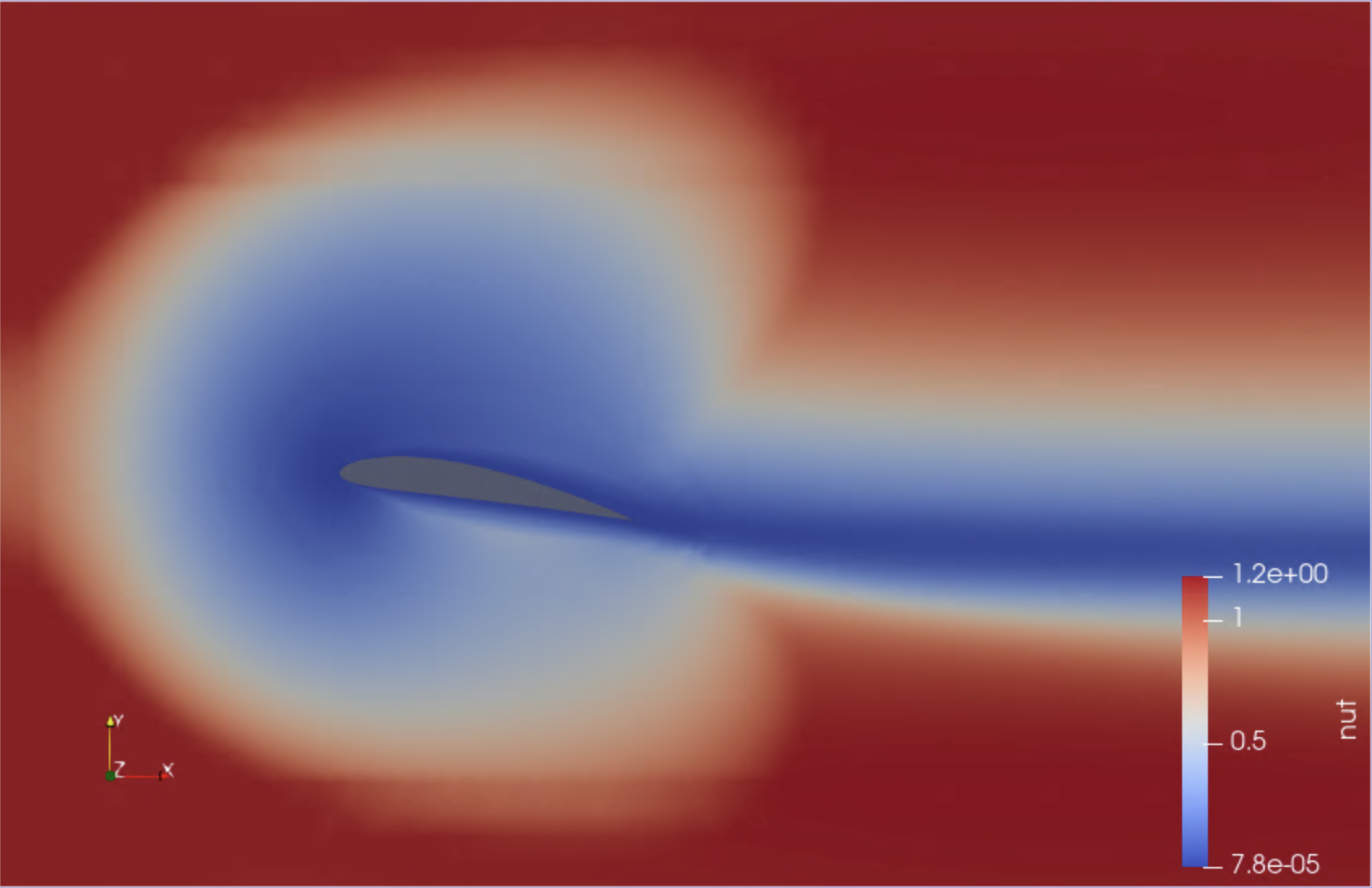

Bonus: a second OpenFoam example with visual output

If time allows, you may want to try out a second simulation, which models the air flow around a two-dimensional wing profile. This example has been kindly contributed by Alexis Espinosa at Pawsey Centre.

The required setup is as follows (a Slurm setup is also available in the alternate directory slurm_pawsey):

cd ~/isc-tutorial/exercises/openfoam_wing/mpirun

./mpirun_intel.sh

This run uses 4 MPI processes and takes about 5-6 minutes. Upon completion, the file wingMotion2D_pimpleFoam/wingMotion2D_pimpleFoam.foam can be opened with the visualisation package Paraview, which is available for this training.

To launch Paraview, use your web browser to open the page https://tut<XXX>.supercontainers.org:8443/#e4s, and login with your training account details. Once you have got the Linux desktop, open up a terminal window and execute paraview. Use the File Open menu to find and open the .foam file mentioned above. Finally, follow the presenter’s instructions to visualise the simulation box. Here are a couple of snapshots:

|

|

|---|

We have just visualised the results of this containerised simulation!

DEMO: Container vs bare-metal MPI performance

NOTE: this part was executed on the Pawsey Zeus cluster. You can follow the outputs here.

Pawsey Centre provides a set of MPI base images, which also ship with the OSU Benchmark Suite. Let’s use it to get a feel of what it’s like to use or not to use the high-speed interconnect.

We’re going to run a small bandwidth benchmark using the image quay.io/pawsey/mpich-base:3.1.4_ubuntu18.04. All of the required commands can be found in the directory path of the first OpenFoam example, in the script benchmark_pawsey.sh:

#!/bin/bash -l

#SBATCH --job-name=mpi

#SBATCH --nodes=2

#SBATCH --ntasks=2

#SBATCH --ntasks-per-node=1

#SBATCH --time=00:20:00

image="docker://quay.io/pawsey/mpich-base:3.1.4_ubuntu18.04"

osu_dir="/usr/local/libexec/osu-micro-benchmarks/mpi"

# this configuration depends on the host

module unload xalt

module load singularity

# see that SINGULARITYENV_LD_LIBRARY_PATH is defined (host MPI/interconnect libraries)

echo $SINGULARITYENV_LD_LIBRARY_PATH

# 1st test, with host MPI/interconnect libraries

srun singularity exec $image \

$osu_dir/pt2pt/osu_bw -m 1024:1048576

# unset SINGULARITYENV_LD_LIBRARY_PATH

unset SINGULARITYENV_LD_LIBRARY_PATH

# 2nd test, without host MPI/interconnect libraries

srun singularity exec $image \

$osu_dir/pt2pt/osu_bw -m 1024:1048576

Basically we’re running the test twice, the first time using the full bind approach configuration as provided by the singularity module on the cluster, and the second time after unsetting the variable that makes the host MPI/interconnect libraries available in containers.

Here is the first output (using the interconnect):

# OSU MPI Bandwidth Test v5.4.1

# Size Bandwidth (MB/s)

1024 2281.94

2048 3322.45

4096 3976.66

8192 5124.91

16384 5535.30

32768 5628.40

65536 10511.64

131072 11574.12

262144 11819.82

524288 11933.73

1048576 12035.23

And here is the second one:

# OSU MPI Bandwidth Test v5.4.1

# Size Bandwidth (MB/s)

1024 74.47

2048 93.45

4096 106.15

8192 109.57

16384 113.79

32768 116.01

65536 116.76

131072 116.82

262144 117.19

524288 117.37

1048576 117.44

Well, you can see that for a 1 MB message, the bandwidth is 12 GB/s versus 100 MB/s, quite a significant difference in performance!

DEMO: Run a deep learning distributed training on GPUs with containers

NOTE: this part was executed on the Pawsey Topaz cluster with Nvidia GPUs. You can follow the outputs here.

For our example we are going to use Tensorflow, a quite popular deep learning package, among the ones that have been optimised to run on GPUs through Nvidia containers.

To start, let us cd to the tensorflow example directory:

cd ~/isc-tutorial/exercises/tensorflow

This example uses the model described in the Tensorflow tutorials for distributed training using Keras: Distributed training with Keras. The model uses the tf.distribute.MirroredStrategy to perform in-graph replication with synchronous training on many GPUs on one node, and has been defined in the python script: distributedMNIST.py . As the name of the script suggests, it uses the classical MNIST dataset, which is used to train a model to recognise handwritten single digits between 0 and 9.

Now, from a Singularity perspective, all we need to do to run a GPU application on Nvidia GPUs from a container is to add the runtime flag --nv. This will make Singularity look for the Nvidia GPU driver and libraries in the host, and mount them inside the container. Hence, when running GPU applications through Singularity, the only requirement on the host system side is a working installation of the Nvidia drivers and libraries for the GPU card (the driver is typically called libcuda.so.<VERSION>). All of these are normally located in some library subdirectory of /usr.

Finally, GPU resources are usually made available in HPC systems through schedulers, to which Singularity natively and transparently interfaces. So, for instance let us have a look in the current directory at the Slurm batch script called gpu_pawsey.sh:

#!/bin/bash -l

#SBATCH --job-name=tf_distributed

#SBATCH --partition=gpuq

#SBATCH --nodes=1

#SBATCH --gres=gpu:2

#SBATCH --tasks-per-node=1

#SBATCH --cpus-per-task=16

#SBATCH --time=01:00:00

###---Singularity settings

theImage="docker://nvcr.io/nvidia/tensorflow:22.04-tf2-py3"

module load singularity

###---Python script containing the training procedure

theScript="distributedMNIST.py"

###---Launching the distributed tensorflow case

srun singularity exec --nv -e -B fake_home:$HOME $theImage python $theScript

Here the key aspect is to combine the Slurm command srun with singularity exec --nv <..> (similar to what we did in the episode on MPI):

srun singularity exec --nv $image python <..>

In addition, we ask for all the GPUs available in a single node (2 in this case), along with a single master task. The distribution of work and communication among the GPUS is managed by Tensorflow through the Nvidia toolkit. But what really concerns to this training is the meaning of the various runtime options that are added to the singularity command:

--nvhas already been explained above.-eavoids the propagation of any environment variable of the host towards the container (except for those explicilty defined through Singularity-specific syntax). This is the recommended way to execute any Python container in order to avoid contamination of the internal Python environment (see previous episode for more information).-B fake_home:$HOMEthis is also related to best practices for containerised Python. For this application, Python tries to download the MNIST dataset into the user’s home directory, which is often not bind mounted by default by the system administrators, therefore generating an error when attempting to write the files. To solve this issue, we do not recommend to bind mount the home directory for containers execution. Instead, we recommend to use an auxiliary directory (calledfake_homein this case) and bind mount it as the container$HOME. In this way, Python applications will write into this auxiliary directory instead of the real home.

We can submit the script with:

sbatch gpu_pawsey.sh

If on Perlmutter, you can submit the corresponding script instead, specifying your NERSC project name:

sbatch -A <NERSC-project> gpu_perlmutter.sh

Several outputs are produced, including the directories training_checkpoints, saved_model, logs and, as mentioned above, the dataset and other Python files hidden output under the fake_home.

ls -ltr

total 168

-rw-rw----+ 1 espn pawsey 4139 May 20 13:34 distributedMNIST.py

drwxrws---+ 4 espn pawsey 4096 May 20 14:09 fake_home

-rw-rw----+ 1 espn pawsey 514 May 20 14:25 gpu_pawsey.sh

drwxr-s---+ 3 espn pawsey 4096 May 20 14:26 logs

drwxr-s---+ 2 espn pawsey 4096 May 20 14:27 training_checkpoints

-rw-rw----+ 1 espn pawsey 142944 May 20 14:27 slurm-311273.out

drwxr-s---+ 4 espn pawsey 4096 May 20 14:27 saved_model

-rw-rw----+ 1 espn pawsey 8112 May 20 16:20 typical_output.out

For this demo, a typical_output.out file is also provided, where you can check that both GPUs in the node were used during the training of the model.

Optional DEMO: Run a molecular dynamics simulation on a GPU with containers

NOTE: this part was executed on the Pawsey Topaz cluster with Nvidia GPUs. You can follow the outputs here.

For our example we are going to use Gromacs, a quite popular molecular dynamics package, among the ones that have been optimised to run on GPUs through Nvidia containers.

To start, let us cd to thegromacsexample directory:cd ~/isc-tutorial/exercises/gromacsThis directory has got sample input files picked from the collection of Gromacs benchmark examples. In particular, we’re going to use the subset

water-cut1.0_GMX50_bare/1536/.Now, from a Singularity perspective, all we need to do to run a GPU application on Nvidia GPUs from a container is to add the runtime flag

--nv. This will make Singularity look for the Nvidia drivers in the host, and mount them inside the container.

Then, on the host system side, when running GPU applications through Singularity the only requirement consists of the Nvidia driver for the relevant GPU card (the corresponding file is typically calledlibcuda.so.<VERSION>and is located in some library subdirectory of/usr).

Finally, GPU resources are usually made available in HPC systems through schedulers, to which Singularity natively and transparently interfaces. So, for instance let us have a look in the current directory at the Slurm batch script calledgpu_pawsey.sh:#!/bin/bash -l #SBATCH --job-name=gpu #SBATCH --partition=gpuq #SBATCH --gres=gpu:1 #SBATCH --ntasks=1 #SBATCH --time=01:00:00 image="docker://nvcr.io/hpc/gromacs:2018.2" module load singularity # uncompress configuration input file if [ -e conf.gro.gz ] ; then gunzip conf.gro.gz fi # run Gromacs preliminary step with container srun singularity exec --nv $image \ gmx grompp -f pme.mdp # Run Gromacs MD with container srun singularity exec --nv $image \ gmx mdrun -ntmpi 1 -nb gpu -pin on -v -noconfout -nsteps 5000 -s topol.tpr -ntomp 1Here, there are two key execution lines, who run a preliminary Gromacs job and the proper production job, respectively.

See how we have simply combined the Slurm commandsrunwithsingularity exec --nv <..>(similar to what we did in the episode on MPI):srun singularity exec --nv $image gmx <..>We can submit the script with:

sbatch gpu_pawsey.shA few files are produced, including the main output of the molecular dynamics run,

md.log:ls -ltrtotal 139600 -rw-rw----+ 1 mdel pawsey 664 Nov 5 14:07 topol.top -rw-rw----+ 1 mdel pawsey 950 Nov 5 14:07 rf.mdp -rw-rw----+ 1 mdel pawsey 939 Nov 5 14:07 pme.mdp -rw-rw----+ 1 mdel pawsey 556 Nov 5 14:07 gpu_pawsey.sh -rw-rw----+ 1 mdel pawsey 105984045 Nov 5 14:07 conf.gro -rw-rw----+ 1 mdel pawsey 11713 Nov 5 14:12 mdout.mdp -rw-rw----+ 1 mdel pawsey 36880760 Nov 5 14:12 topol.tpr -rw-rw----+ 1 mdel pawsey 9247 Nov 5 14:17 slurm-101713.out -rw-rw----+ 1 mdel pawsey 22768 Nov 5 14:17 md.log -rw-rw----+ 1 mdel pawsey 1152 Nov 5 14:17 ener.edr -rw-rw----+ 1 mdel pawsey 8112 Nov 5 14:17 typical_output.outFor this demo, a

typical_output.outfile is also provided, where you can check that the GPU was used during the simulation.

Key Points

Appropriate Singularity environment variables can be used to configure the bind approach for MPI containers (sys admins can help); Shifter achieves this via a configuration file

Singularity and Shifter interface almost transparently with HPC schedulers such as Slurm

MPI performance of containerised applications almost coincide with those of a native run

You can run containerised GPU applications with Singularity using the flags

--nvor--rocmfor Nvidia or AMD GPUs, respectively